Enabling Responsible AI Use in Machine Learning

Working in machine learning and AI, we often see a mismatch between what goes into a model and the output we actually wanted. Responsible AI and an ethical approach to machine learning dictate that business leadership walk into the process with their eyes wide open.

Artificial intelligence and machine learning are still in their nascent phase, but with the field growing and expanding every day, the implications could soon outpace our ability to manage them. Every AI and ML project leader must take responsible AI seriously.

The biggest question we should all be asking is: what are we really doing with AI and ML, and will the intended outcome fit our expectations and align with our ethics?

Understanding Why Responsible AI is Crucial for the Future (and Now)

Digging into the responsible AI question means that we must understand that it's not a simple A+B=C formula. We often hear business leaders say, "I was trying to use machine learning for this process, and I expected it to give me X, but instead, it gave me Y."

(Or they hand it off to their IT team without fully exploring the possible outcomes until a problem arises.)

Generally speaking, the issue is never the "fault" of the machine but rather the fault of the data and model. AI software is never provably 100% correct. It assumes training inputs are representative of the unseen actual inputs, which vary over time. Code is essential, but data is even more crucial. There must be checks and balances to ensure that existing reliability practices are in place and to put in new rules whenever we undertake a new endeavor.

For example, Amazon faced serious concerns a few years ago when leadership used an algorithm for recruiting new employees. The online retail giant ran into some significant roadblocks (and a legal and PR nightmare) when it came to light that the algorithm “hated” women.

These unplanned biases can show up everywhere in machine learning. A recent study at the University of Chicago found that AI bias was rife in healthcare algorithms and hospital applications. Not only does this create a double standard of care, but it can become a matter of life and death. When biased models fail to catch outliers and miss unusual signs of illness, patients may miss out on life-saving treatments and interventions.

The need for responsible AI is everywhere. Hospitals, insurance, and healthcare present big areas with heavy ethical implications, but there are also plenty of other places where AI bias and a failure to recognize gaps in algorithms can create dire outcomes.

Imagine you build a machine learning model to decide whether clients will receive a loan. Your team bases the screening on a simple model that takes in 30 to 50 characteristics about an applicant. It looks at their credit score, financial status, history, plans, and desires. The model trains on a data set of thousands, even tens of thousands, of loans, learning whether they were successful based on those historical characteristics.

The model may end up concluding that “this loan has a 70% chance of success,” or “there’s a 90% chance this loan will be good.” But what we may not be seeing is some baked-in accidental bias. For example, maybe the historical data references the applicants’ gender, so suddenly, 10% fewer loans are going to women. This bias is probably not the intention of your product, but we have to identify it, plan for it, and root it out to ensure we’re engaging in ethical, responsible AI.
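One simple way to surface this kind of gap is to compare approval rates across groups. The sketch below is only an illustration: the `approval_rates` helper, the field names, and the decision records are all hypothetical, invented to mirror the loan scenario rather than taken from any real system.

```python
from collections import defaultdict


def approval_rates(records, group_key="gender", outcome_key="approved"):
    """Return the share of approved applications for each group."""
    totals = defaultdict(int)
    approved = defaultdict(int)
    for row in records:
        totals[row[group_key]] += 1
        approved[row[group_key]] += int(row[outcome_key])
    return {group: approved[group] / totals[group] for group in totals}


# Hypothetical model decisions, invented for illustration only.
decisions = (
    [{"gender": "female", "approved": True}] * 6
    + [{"gender": "female", "approved": False}] * 4
    + [{"gender": "male", "approved": True}] * 7
    + [{"gender": "male", "approved": False}] * 3
)

rates = approval_rates(decisions)
gap = max(rates.values()) - min(rates.values())

print(rates)
print(f"approval-rate gap: {gap:.2f}")
```

A gap on its own doesn't prove the model is unfair, since the groups may differ in other ways, but it tells you where to start asking questions.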

At the end of the day, every time you teach a machine or engage in artificial intelligence, you should be thoroughly looking at the outcome. How do you know what was learned? Was that really what you intended to teach the machine?

Playing with Fire in AI Ethics

Over 75% of CEOs believe that AI is good for society, but an even higher proportion, 84%, agree that AI-based decisions need to be transparent and explainable to gain consumer and stakeholder trust.

Based on these findings, there's a clear need for C-suite leaders to review the AI practices within their company. We should ask questions, examine and tackle the potential risks, and approach the process with our eyes wide open. Before we go forward, we must address areas where controls and processes are lacking or inadequate.

Companies that go into AI and machine learning irresponsibly are setting themselves up for a crisis. Many companies are "dabbling" in machine learning, unaware that they're playing with fire. They're about to release models into the world that don’t perform as they expect, and it could have a dramatic impact on their business.

Now, the answer isn’t to avoid or fear machine learning or AI. The key is to set up a series of checkpoints and measures to ensure your outcomes are reflective of your business ethics.

InterpretML: A Solution for Responsible AI

In the AI world, we may hear programmers refer to AI models as "black-box" models. Essentially, this means that the input data goes into the black box, where a complex algorithm mysteriously applies some ML “magic” to the set and then arrives at a decision output. Most folks aren’t aware of how the model reached the conclusion, but they’re asked to trust it as accurate (which it likely is, per the formula) and ethical (which it may or may not be).

The simple counter idea is known as the "glass-box" model. A glass-box model is transparent, built on simple algorithms that can easily be explained. There are some challenges with the glass-box model, though, because you may be trading off some accuracy for simplicity and understandability.
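To make the glass-box idea concrete, here is a minimal sketch of a transparent scoring model: a logistic model whose prediction breaks down into one additive contribution per feature. The weights, intercept, feature names, and applicant are hypothetical values chosen for illustration; a real model would learn its weights from data.

```python
import math

# Hypothetical weights for illustration; a real model would learn these.
WEIGHTS = {"credit_score": 0.01, "debt_to_income": -4.0, "years_employed": 0.1}
INTERCEPT = -5.0


def explain(applicant):
    """Return the predicted probability and each feature's contribution."""
    # Each feature adds (weight * value) to the log-odds, so the
    # contributions can be read off directly.
    contributions = {name: WEIGHTS[name] * applicant[name] for name in WEIGHTS}
    log_odds = INTERCEPT + sum(contributions.values())
    probability = 1.0 / (1.0 + math.exp(-log_odds))
    return probability, contributions


applicant = {"credit_score": 700, "debt_to_income": 0.3, "years_employed": 5}
probability, contributions = explain(applicant)

print(f"probability of success: {probability:.2f}")
for name, value in sorted(contributions.items(), key=lambda item: -abs(item[1])):
    print(f"{name:>15}: {value:+.2f}")
```

Because every contribution is visible, anyone reviewing a decision can see which characteristics pushed it up or down. That is the transparency a black-box model doesn't give you out of the box.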

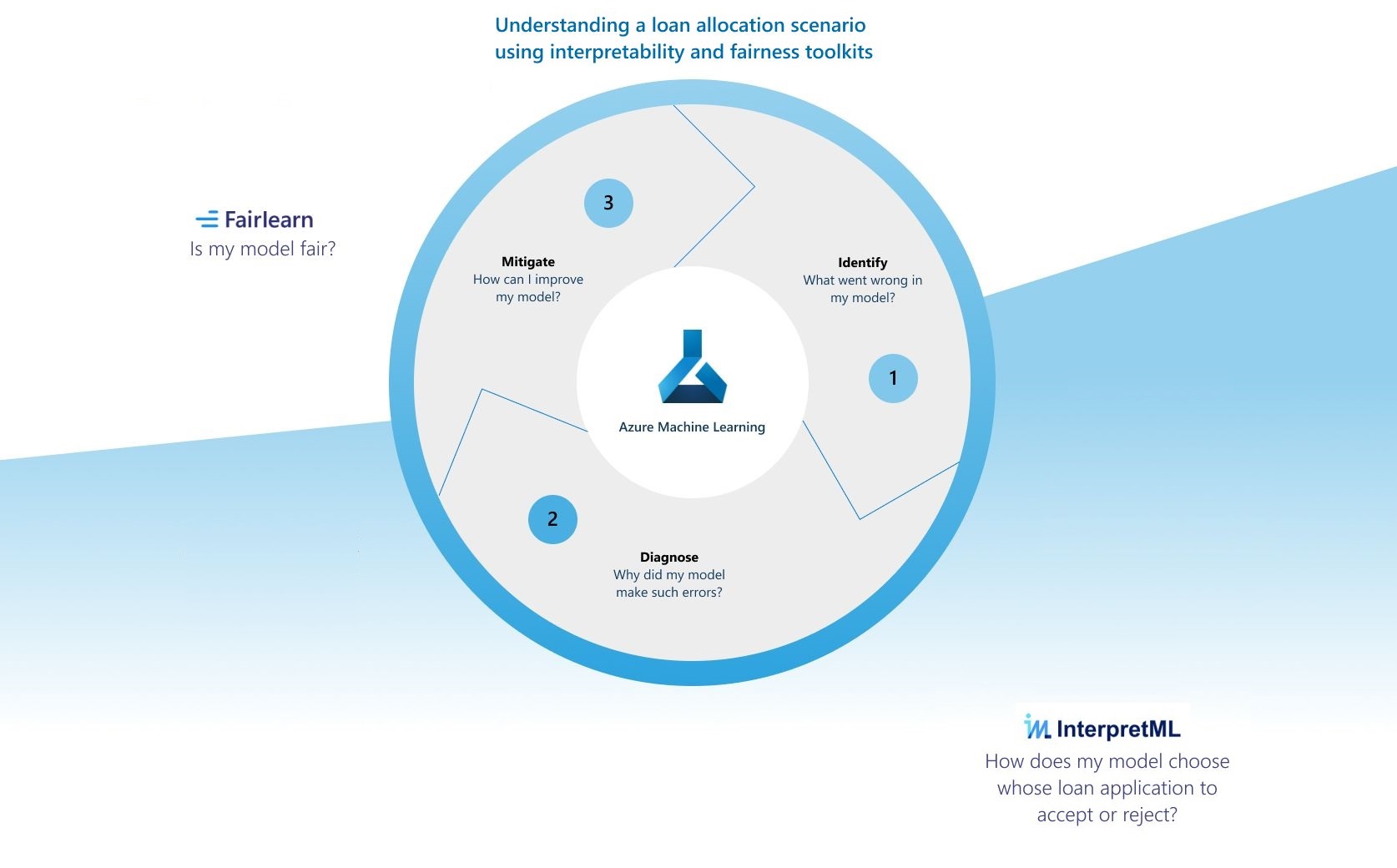

Microsoft InterpretML is an open-source tool embedded in the Azure Machine Learning space that offers straightforward interpretability and a clearer understanding of machine learning models. InterpretML helps explain predictions and debug models with features and tools to ensure that responsible AI is applied to the scenario. It explores how model performance changes for different data subsets and helps you understand model errors on a local and macro level. You can run an analysis to see the more significant impact and implications of the model.

With a tool like InterpretML, you take the ML model, apply scenarios, and see how it performs. In the loan scenario, as mentioned above, you may want to test the performance by looking at female applicants versus male applicants. You'd also look at how it performs based on demographics like ethnicity, age, income level, and more. What happens when someone who makes $50,000 per year applies for a million-dollar mortgage? Ideally, you'd check both the cases where applications are approved and cases where they aren't approved to determine the parameters and how the data is being read.
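The slicing idea can be sketched in a few lines without any particular tool. This is not InterpretML's API; the `accuracy_by_slice` helper, the field names, and the rows are hypothetical, and the example only shows the general pattern of scoring a model separately on each subgroup.

```python
from collections import defaultdict


def accuracy_by_slice(rows, slice_key):
    """Return the model's accuracy within each value of slice_key."""
    correct = defaultdict(int)
    totals = defaultdict(int)
    for row in rows:
        totals[row[slice_key]] += 1
        correct[row[slice_key]] += int(row["actual"] == row["predicted"])
    return {value: correct[value] / totals[value] for value in totals}


# Hypothetical loan outcomes (1 = repaid) and model predictions.
rows = [
    {"gender": "female", "actual": 1, "predicted": 1},
    {"gender": "female", "actual": 1, "predicted": 0},
    {"gender": "female", "actual": 0, "predicted": 0},
    {"gender": "female", "actual": 1, "predicted": 0},
    {"gender": "male", "actual": 1, "predicted": 1},
    {"gender": "male", "actual": 0, "predicted": 0},
    {"gender": "male", "actual": 1, "predicted": 1},
    {"gender": "male", "actual": 0, "predicted": 1},
]

by_gender = accuracy_by_slice(rows, "gender")
print(by_gender)
```

The same helper works for any column you add to the rows, such as an age band or income level, so you can repeat the check for each demographic you care about.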

One really cool aspect of a tool like InterpretML is that it helps you understand how it's performing in these given scenarios. Then it offers recommendations to help you adjust your model to mitigate the bias. For example, in the loan scenario, the tool may help you determine why you're seeing the unfair partiality toward male applicants and then help you adjust and correct the path to retrain the model.

Machine learning and AI are going to continue to grow at a rapid pace. Not only will they help businesses perform more accurately and more efficiently, but they can process vast amounts of data in a way that wasn't possible in the past. There are many positive roles that AI can take in business to ensure your continued growth and success.

But the responsibility of an ethical approach is incumbent on all of us. As the saying goes, with great power comes great responsibility. There’s also liability: both the reputation of your company and the trust of your customers are at stake.

If you're ready to explore how a responsible approach to machine learning and AI can take your company into a stronger tomorrow, reach out today. We’re here to help you move your technology forward.